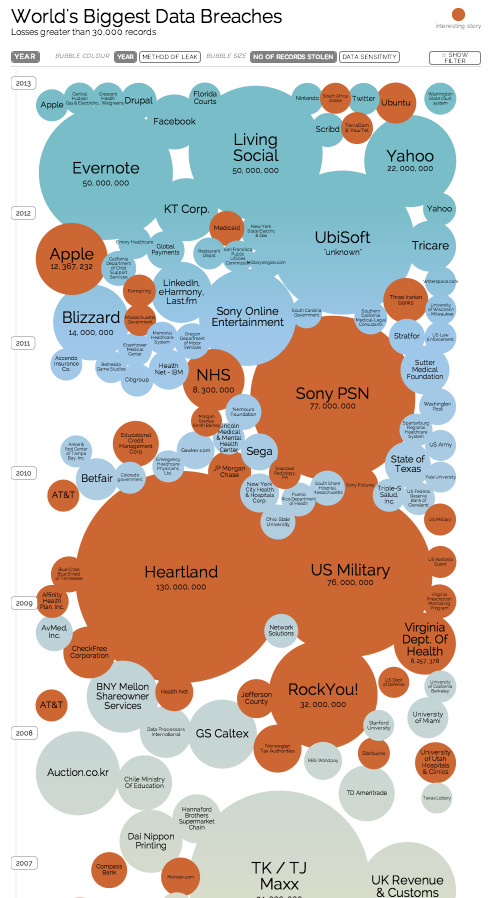

2016 was a year of massive data security breaches. Targets included Yahoo, Verizon, and the Clinton campaign, among others. Amid the alarm, cybersecurity professionals are declaring the simple numerical password — well, passé. A survey of 600 security experts sponsored by mobile ID provider Telesign revealed that 69% of the respondents did not think passwords provide enough security.

In response to the mounting concern over data protection, 72% of businesses plan to phase out passwords entirely by 2025, forgoing them in favor of more secure alternatives such as biometric scanners and two-factor authentication (2FA).

But while biometrics and 2FA are certainly more difficult to crack than simple numerical combinations, they are still not secure enough for some. One vulnerability lingers: they cannot detect when a user is being coerced to authenticate, whether by typing in a code or by placing a finger on a biometric scanner.

To address this vulnerability, researchers have started proposing designs for coercion-resistant passwords. A team from Stanford University has devised a proof-of-concept model for a system that issues subliminal passwords to users through an individual 30- to 40-minute “training session” resembling a video game. Users of the system can never reveal their passwords, even under threat of coercion, because they simply do not know them. Additionally, researchers at California State Polytechnic University, Pomona have developed a system that authenticates users based on their subconscious physiological responses to music samples. If the system detects duress, possibly resulting from the threat of coercion, during the sample designed to induce subconscious relaxation, it refuses to grant access.

So far, experts intend these coercion-resistant password systems for government or commercial use, for example to restrict access to top-secret government data or sensitive aggregate financial information. In this respect, they could considerably enhance government and corporate data security protocols.

But there is little reason to assume that these emerging technologies, once introduced, will not be subject to function creep, particularly amid the growing public demand for more secure personal data protection. Much like encryption technology, coercion-resistant password systems may eventually become available to citizens to incorporate into their personal data protection protocols.

The proliferation of coercion-resistant password systems would pose a significant and thus far overlooked challenge for law enforcement and the courts: the coercion-resistant password would effectively nullify government-requested compelled decryption.

Courts at the district, state and federal levels are encountering compelled decryption cases with increasing frequency, because private citizens are using encryption technology more often to protect their personal electronic devices. At present, encrypted devices are virtually impossible to access without knowing the encryption password. As a result, when law enforcement seizes an electronic device during an investigation and finds itself unable to access the device’s contents because of an encryption lock, it typically appeals to the courts to compel the suspect to unlock the device.

The courts do not always compel decryption. Some courts have sided with the government and compelled the suspect to decrypt, while others have held that compelled decryption violates the suspect’s Fifth Amendment privilege against self-incrimination. Nonetheless, law enforcement has thus far had the recourse of appealing to the courts to compel a suspect to decrypt a device when it has been unable to access the device’s contents through independent investigative means. Coercion-resistant password systems, however, would void court-ordered compelled decryption. With the Stanford design, the user could not consciously recall the password required to unlock the device. With the Cal Poly system, the user would not be able to control his or her subconscious physiological response under the duress of being compelled to decrypt, and the system would deny access because of detected coercion.

What is the proper balance between law enforcement’s investigatory privilege and the individual’s right to privacy and privilege against self-incrimination? How will novel technologies, such as next-generation encryption technology and coercion-resistant password systems, shift that balance?

References:

“Beyond the Password: The Future of Account Security,” Lawless Research Report, sponsored by Telesign, 2016.

Gareth Morgan, “Scientists Mimic Guitar Hero to Create Subliminal Passwords for Coercion-Proof Security,” V3.co.uk, 2012.

Max Wolotsky, Mohammad Husain, and Elisha Choe, “Chill-Pass: Using Neuro-Physiological Responses to Chill Music to Defeat Coercion Attacks,” arXiv preprint arXiv:1605.01072, 2016.

Val Van Brocklin, “4 Court Cases on Decryption and the Fifth Amendment,” PoliceOne.com.